-

Services

Beyond education, our enterprise arm, MT Research Labs, partners with organizations to design secure, production-ready AI solutions tailored for real-world business use. We design and deploy locally hosted, enterprise-grade private AI systems that run fully on your own infrastructure. Operate multiple large language models offline with complete data sovereignty, secure AI workflows, and scalable performance. Our platform integrates Retrieval-Augmented Generation for instant answers from your internal documents, Model Context Protocol for real-time research and intelligence, and private AI image and video generation. Powered by high-performance hardware, your system stays fast while your data stays private and fully under your control.

Here's a list of what we do:

AI Applications – Operate multiple large language models offline to access advanced AI capabilities without internet dependence or subscription platforms. All processing stays on your local network, giving you full control over data privacy and security.

SMB grade LLM – Suitable for [...]

-

Locally Hosted AI Deployment

AI Applications – Operate multiple large language models offline to access advanced AI capabilities without internet dependence or subscription platforms. All processing stays on your local network, giving you full control over data privacy and security.

SMB grade LLM – Suitable for small and medium businesses with limited resources, fewer staff, and lower IT infrastructure investment. Key benefits: cost-efficiency, ease of use, quick AI deployment, on-premise language models, and data sovereignty.

Retrieval-Augmented Generation Feature – Let AI access your knowledge base so it can search your documents, PDFs, and images instantly, delivering answers grounded in your own data. AI assistants can answer employee questions using company-specific guides, FAQs, and internal reports, drawing on internal documentation to give accurate answers about a specific product or policy.

AI Chatbots – Useful for internal teams, offering 24/7 access to knowledge base documentation, FAQs, or task assistance without human intervention. AI website chatbots can streamline [...]
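To make the Retrieval-Augmented Generation idea concrete, here is a minimal sketch of the retrieve-then-prompt step. It is an illustration only, not our production pipeline: the `DOCUMENTS` contents, the function names, and the simple word-overlap scoring are all assumptions made for this example, and a real system would typically use an embedding model and a vector index instead.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base (illustrative content only).
# A real deployment would index company PDFs, guides, and reports.
DOCUMENTS = {
    "leave-policy": "Employees receive 14 days of annual leave per calendar year.",
    "expense-guide": "Submit expense claims with receipts within 30 days of purchase.",
    "product-faq": "The warranty on the product covers parts and labour for two years.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (Counter objects)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(question, documents, top_k=1):
    """Return the ids of the top_k documents most similar to the question."""
    query = Counter(tokenize(question))
    scored = [
        (cosine_similarity(query, Counter(tokenize(text))), doc_id)
        for doc_id, text in documents.items()
    ]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:top_k] if score > 0]


def build_prompt(question, documents, top_k=1):
    """Assemble the prompt that would be sent to the locally hosted LLM."""
    doc_ids = retrieve(question, documents, top_k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("How many days of annual leave do employees get?", DOCUMENTS))
```

The key design point is that the model only sees the retrieved passages, so answers stay tied to your own documents and nothing has to leave the local network.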

-

What is MCP?

MCP servers can act as a lightweight, static AI agent that browses the web to fetch the latest information on open-source LMs, without requiring full model inference. Since they’re static, they’re not meant to generate responses; they just gather, cache, and serve information, which makes them ideal for non-interactive use cases. They are well suited to supporting internal teams, developers, or users who need real-time context on open-source models. Think of them as a “browser + knowledge updater”: not a chatbot, not a model, just a tool that keeps you informed.

Model Context Protocol (MCP) is an open standard that lets AI apps talk to external tools in a structured, secure way. Think of it as a universal adapter: it allows AI models to access local tools, APIs, databases, search engines, or custom scripts through a consistent protocol. Instead of every app inventing its own plugin format, MCP creates [...]
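As a rough illustration of what "a consistent protocol" means in practice, MCP messages are JSON-RPC 2.0 objects, and a client invokes a tool with the `tools/call` method. The sketch below builds such a request and answers it with a toy in-process handler. It does not use an MCP SDK or any transport; the `search_docs` tool, the `TOOLS` registry, and the helper names are hypothetical and exist only for this example.

```python
import json


def search_docs(query):
    """Hypothetical tool for illustration; a real server exposes its own tools."""
    return f"Top result for '{query}'"


# Hypothetical registry mapping tool names to Python callables.
TOOLS = {"search_docs": search_docs}


def make_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking the server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def handle_request(request):
    """Toy handler: dispatch a tools/call request and wrap the result as text content."""
    request_id = request.get("id")
    if request.get("method") != "tools/call":
        # -32601 is the standard JSON-RPC "method not found" error code.
        return {
            "jsonrpc": "2.0",
            "id": request_id,
            "error": {"code": -32601, "message": "Method not found"},
        }
    params = request["params"]
    tool = TOOLS.get(params["name"])
    if tool is None:
        # -32602 is the standard JSON-RPC "invalid params" error code.
        return {
            "jsonrpc": "2.0",
            "id": request_id,
            "error": {"code": -32602, "message": "Unknown tool"},
        }
    text = tool(**params["arguments"])
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {"content": [{"type": "text", "text": text}], "isError": False},
    }


if __name__ == "__main__":
    request = make_tool_call(1, "search_docs", {"query": "leave policy"})
    print(json.dumps(handle_request(request), indent=2))
```

Because every tool is called through the same message shape, an AI app only needs one client implementation to reach many different tools.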

-

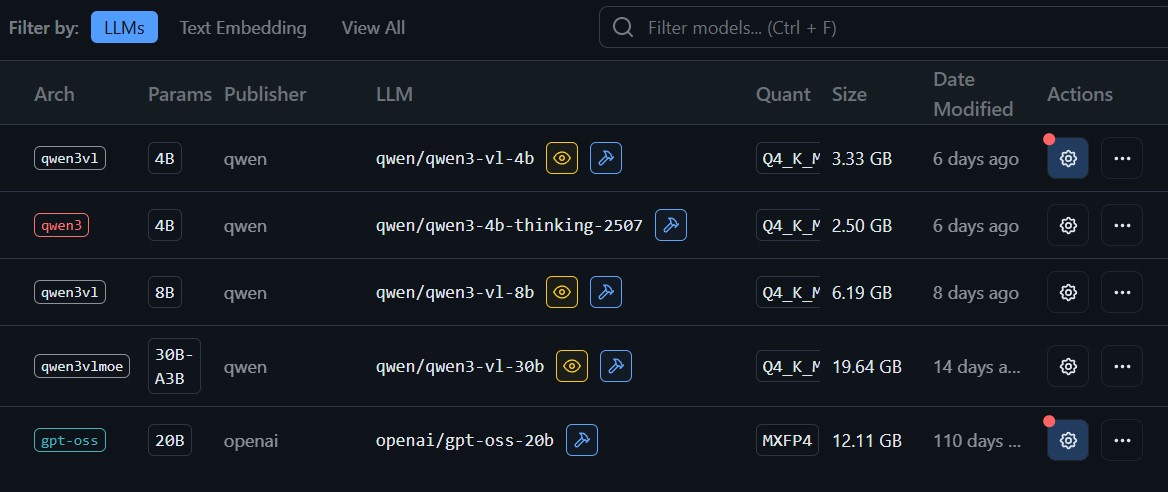

Running a local LLM gives you full control of your data, which is critical for sectors that handle sensitive information. A law firm can review contracts, draft clauses, or summarise case notes without sending confidential documents to external servers. A finance team can analyse reports, prepare statements, or explore forecasting ideas while keeping client records completely offline. Everything stays on your machine, protected by your own security policies. This setup delivers fast performance, total privacy, and a safer way to use AI for high-trust industries that cannot risk leaks or exposure. You also avoid paying a monthly subscription for a cloud service such as ChatGPT.

Choose your Own LLM Model

Running a local setup lets users pick the exact model that fits their workflow. Here are some of our favourite models and their benefits.

Alibaba Qwen3 VL 30B

The latest generation vision-language MoE model in the Qwen series with comprehensive upgrades to visual [...]

-

Qwen ComfyUI Explained: Benefits of Building Locally Hosted Pipelines

Our team at MT Research Labs, part of Mind Theory, uses Qwen-Image-Edit. This ComfyUI pipeline simultaneously feeds the input image into Qwen2.5-VL (for visual semantic control) and the VAE Encoder (for visual appearance control), enabling both semantic and appearance editing.

https://www.mindtheory.sg/wp-content/uploads/qwen-multi-angle.mp4

In short, this workflow offers benefits such as:

Changing the angle of a person, character, or scene while maintaining consistency of clothes and facial features

Changing the expression of an actor, person, or character without going back to reshoot photography or video

Wan 2.2 Animate (Image to Video)

A model developed by Alibaba that transforms static images into short video sequences. Wan2.2 represents a significant leap in motion generation capabilities, trained on a substantially expanded dataset featuring 65.6% more images and 83.2% more videos than its predecessor. This enriched training data dramatically improves the model’s ability to [...]

-

RunwayML Act-One generates captivating animations from video and voice inputs, advancing the use of generative models for dynamic live-action and animated storytelling.

No Longer a Tedious Process

Classic facial animation pipelines are like high-stakes marathons for animators: think motion capture suits, endless video references, and tedious face rigging all mashed together. The mission? To squeeze an actor’s expression into a 3D model that doesn’t look like a robot on its day off. The real trick is to somehow keep every eyebrow raise and smirk intact, which is kind of like moving an entire orchestra note-for-note into a kazoo. Challenging? Yes! Act-One follows a different pipeline, relying solely on an actor’s performance, with no extra equipment needed.

Live Action

The model shines in creating cinematic, realistic outputs with high-fidelity face animations, adapting well to various camera angles. This enables users to build lifelike characters that convey true emotion, deepening audience engagement. We’re thrilled to [...]

-

ComfyUI Gen AI Course (Adult)

Open for personal, corporate and tertiary signups. Corporate group bookings available. Useful for in-house creative teams. Ages 21 and above (2:00pm-6:00pm). Class size of approx. 5-7 students. Nearest MRT is Tai Seng (Exit B); the building has parking available.

The Generative AI course offers a comprehensive lesson plan for adults, designers and working professionals to learn about the power of ComfyUI with the Flux Dev model. As the only course provider in Singapore teaching ComfyUI, we cover a workflow that includes the “Dual Clip Loader” node, which loads the text encoders used for embeddings, with options for both clip_l.safetensors and t5xxl_fp16.safetensors. The t5xxl_fp16.safetensors file in this context refers to the larger, more powerful text encoder used in Stable Diffusion 3 and related models. Flux Dev is a powerful 12-billion parameter text-to-image model developed by Black Forest Labs. It is designed for both research and development [...]

-

Generative AI art refers to artworks created with the help of Generative Artificial Intelligence models. These models are designed to generate new content, such as images, based on prompts, ControlNet and node workflows. Below are some examples of what it can achieve. For Mind Theory, a learning centre immersed in cutting-edge technologies, integrating generative AI into educational content creation promises an exciting future. The fusion of creativity and artificial intelligence opens doors to a new era of personalized and effective learning.

Scribble to Image

Using the Scribble method in ControlNet, we input a quick doodle of a character and chose a manga-trained model. This method produces a nuanced, shaded output that follows our specified prompts.

DWOpenpose to Image

DWPose is used for human whole-body pose estimation. Using the editor, we can articulate the limbs at any desired angle.

Lineart Sketch to Manga

This [...]

-

In the realm of Generative AI, "LoRA" stands for Low-Rank Adaptation. LoRA is a fine-tuning technique that freezes the weights of a pre-trained model and trains a small pair of low-rank matrices added alongside them, so the model can learn a new style, character, or subject from limited data at a fraction of the usual training cost.

Example of LoRA of Hedge Sculpture

Because only the low-rank matrices are trained, a LoRA file is small and can be loaded on top of a compatible base checkpoint, with a weight setting that controls how strongly it influences the generated image.

For Mind Theory, a learning centre immersed in cutting-edge technologies, integrating generative AI into educational content creation promises an exciting future. The fusion of creativity and artificial intelligence opens doors to a new era of personalized and effective learning.

LoRA Panel in SD Automatic1111

Efforts of [...]
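For readers who want to see the mechanics, here is a small sketch of the Low-Rank Adaptation (LoRA) technique as it is used with image models: the frozen weight matrix W is combined with a scaled product of two small trained matrices, B and A. The matrix values, the `alpha` setting, and the function names are made up for illustration; this is plain Python rather than the code of any particular training tool.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def lora_effective_weight(w, a, b, alpha):
    """Return W + (alpha / r) * (B @ A), where r is the LoRA rank.

    w: frozen base weights, shape d x k
    b: trained matrix, shape d x r
    a: trained matrix, shape r x k
    """
    rank = len(a)
    scale = alpha / rank
    delta = matmul(b, a)
    return [
        [w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
        for i in range(len(w))
    ]


def trainable_parameters(d, k, rank):
    """Number of values LoRA trains for one d x k layer."""
    return rank * (d + k)


if __name__ == "__main__":
    # Illustrative 3 x 3 layer with a rank-1 adapter (values are made up).
    w = [[0, 0, 0], [0, 0, 0], [0, 0, 0]]
    b = [[1], [0], [2]]
    a = [[1, 2, 3]]
    print(lora_effective_weight(w, a, b, alpha=2))
    # Compare adapter size with full fine-tuning for a 768 x 768 layer.
    print(trainable_parameters(768, 768, rank=4), "vs", 768 * 768)
```

In actual training, B usually starts at zero so the adapted model initially behaves exactly like the base model, and the adapter weight chosen at generation time plays the role of the scale factor here.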

-

Generative AI enables architects and product designers to explore a vast array of design alternatives quickly. By inputting parameters and constraints, they can use AI to generate diverse design options, pushing the boundaries of traditional architecture and fostering creativity. Integrating generative AI in research promises an exciting future. The fusion of creativity and artificial intelligence opens doors to a new era of experimental research work.

Gen-AI offers a promising tool to enhance collaboration among architects and project stakeholders. By generating diverse options based on specific criteria, architects can present clients with a spectrum of creative solutions that consider sustainability and community impact. Ensuring designs are structurally feasible is vital, because Gen-AI's rapidly generated options may overlook engineering constraints. Pairing Gen-AI with human expertise unlocks experimentation. As the technology evolves, expect more applications, from energy efficiency to intricate project collaborations, shaping the future of architectural design.

For Architectural Use | Lineart Sketch to Image

The [...]

-

Stable Diffusion is a latent diffusion model, a kind of deep generative artificial neural network. Its code and model weights have been released publicly, and it can run on most consumer hardware equipped with a modest GPU with at least 8 GB VRAM. This marked a departure from previous proprietary text-to-image models such as DALL-E and Midjourney, which were accessible only via cloud services.

Compared with free cloud-based AI programs such as StableDiffusionWeb, DALL-E, Bing Image Creator, Leonardo or Lexica, Stable Diffusion running locally through Automatic1111 gives you far more control, which makes it well suited to producing highly personalized and unique images.

Advantages:

Training Data: Models, sometimes called checkpoint files, are pre-trained Stable Diffusion weights intended for generating general images or a particular genre of images.

Fine-Tuning: Fine-tuning is a common technique in machine learning. It takes a model that is trained on a wide dataset and trains a bit more on a [...]

-

Drag Your GAN: Interactive Point-based Manipulation on the Generative Image Manifold

Drag Your GAN is a method for manipulating images produced by generative adversarial networks (GANs) through a user-friendly interface. In this context, "drag" refers to interactively changing or controlling aspects of an image by clicking and dragging points on a user interface. This approach makes it possible to intuitively alter generated images in real time, offering a hands-on experience with AI and machine learning model adjustments. This can be particularly useful in fields like graphic design, game development, or any creative industry needing precise control over AI-generated outputs.

Point-based manipulation of this kind could influence tools like Photoshop by bringing in advanced AI-driven features. It allows users to manipulate images with a level of detail and creativity that traditional tools might not easily allow. By incorporating GANs, Photoshop could offer more intuitive, real-time [...]

-

OpenAI GPT-4o: Discover the latest in voice assistant technology, innovative vision capabilities, and all the essential updates you need to know.

GPT-4o ("o" for "omni") marks a significant milestone in artificial intelligence and a step towards more natural human-computer interaction. It can take in text, audio, image, and video inputs, and produce outputs in text, audio, and image formats. It responds to audio inputs in as little as 232 milliseconds, with an average of 320 milliseconds, closely mirroring human conversation speed. In terms of text and code performance in English, GPT-4o matches GPT-4 Turbo, and it performs better on non-English languages. It is also much faster and 50% cheaper via the API. Notably, GPT-4o surpasses existing models in vision and audio comprehension.

OpenAI Spring Update

Currently, its primary advantage lies in introducing powerful reasoning, processing, and natural language capabilities to the [...]

Established in Mar 2023, we are Singapore’s pioneering AI Education Provider, offering Gen AI holiday camps for children and teens, alongside adult corporate workshops and Singapore secondary school programs. Our parent company Critica, founded in 2005, is a leading Singapore motion design studio with decades of experience producing high-impact creative work for the advertising industry.

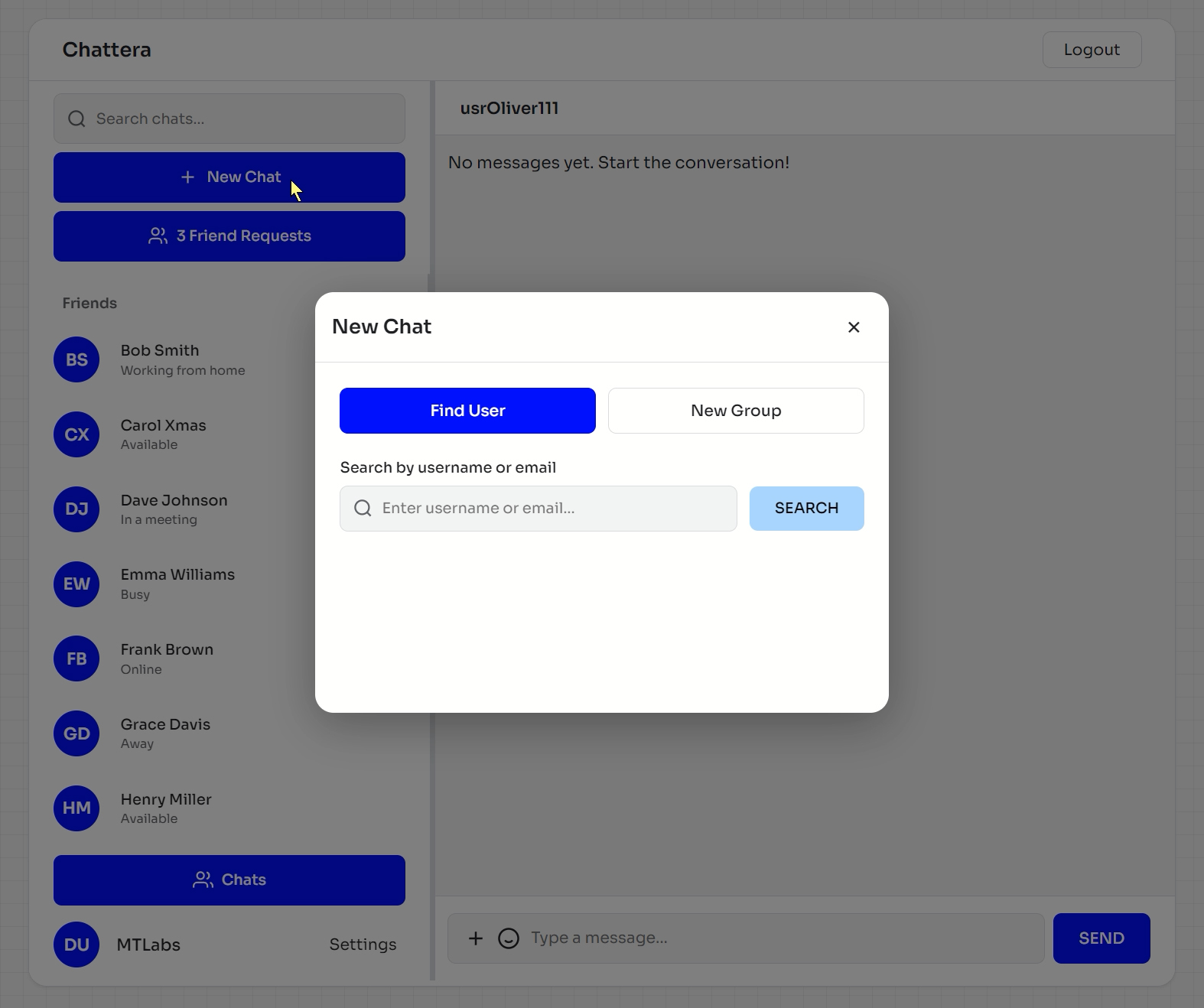

Beyond education, our B2B arm, MT Labs, partners with organizations to design secure, production-ready AI solutions tailored for real-world business use. We design and deploy locally hosted, SMB-grade private AI systems that run fully on your own infrastructure. Operate multiple large language models offline with complete data sovereignty, secure AI workflows, and scalable performance. Our platform integrates Retrieval-Augmented Generation for instant answers from your internal documents, Model Context Protocol for real-time research and intelligence, and private AI image and video generation. Powered by high-performance AMD Ryzen 9 and NVIDIA RTX 5090 hardware, your system stays fast while your data stays private and fully under your control.